AI-NATIVE APPLICATION PENETRATION TESTING

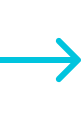

Attack Your AI Systems Before Attackers Do

Find out whether attackers can manipulate your AI applications to access sensitive data, bypass safeguards, or misuse automated workflows.

Assess your AI security exposure
