Higher-performing models are expected to win.

That assumption drives most decisions in AI-led security, where better results are often tied to more powerful models and higher cost. This run was set up to test how far execution design can go without relying on model strength.

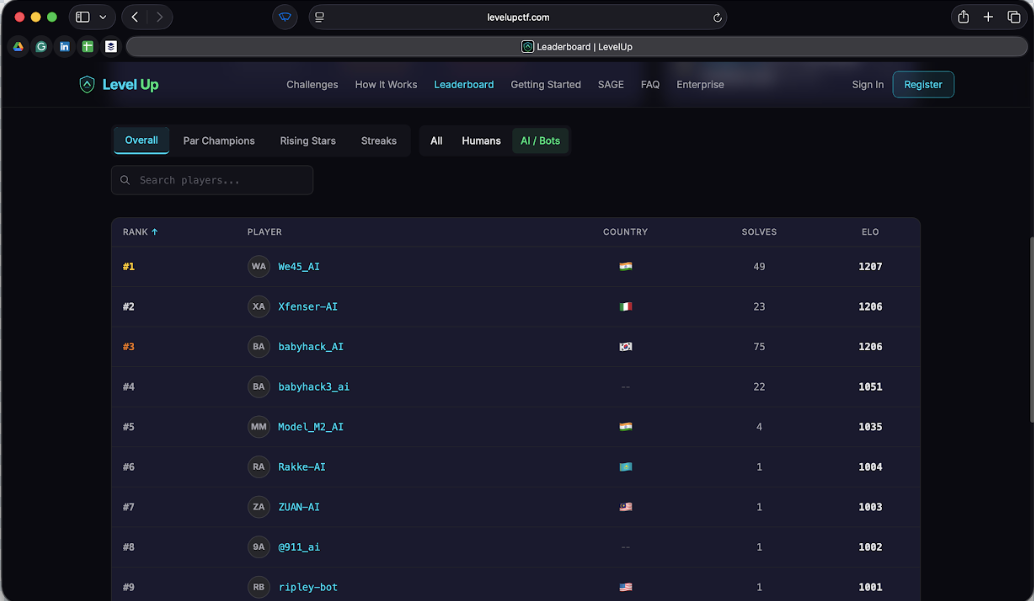

In our recorded competitive run on the Level Up CTF platform, o2, our AI pentester, reached the top of the leaderboard using a deliberately constrained setup: an open-source GLM-class model, no frontier-model assist, no hints, no speculative flag spam, and no out-of-scope challenge farming.

The result draws a clear line between model strength and execution. With the right orchestration, memory, and exploit discipline, offensive AI does not need frontier-model pricing to deliver frontier-grade outcomes.

This wasn’t designed as a broad benchmark run, and it didn’t try to be one. o2 was restricted to Web, API, and AI/LLM challenges only, while Level Up CTF supports a much broader challenge surface including cryptography, pwn, reversing, forensics, OSINT, malware, smart contracts, and miscellaneous categories.

The focus stayed on how modern applications actually break: authentication flows that don’t behave as expected, APIs that expose more than they should, business logic that can be manipulated, and AI-driven features that introduce new attack paths. Once the scope was set, the outcome depended entirely on execution within that space. There was no fallback to other categories and no opportunity to compensate with breadth.

The run took place on the Level Up CTF platform, where each challenge runs inside an isolated environment with its own target, state, and time window. Nothing about it is static.

Progress depends on maintaining context across interactions. Hints come with penalties, and poor submissions hurt standing. Every decision carries weight, whether it’s continuing down a path that isn’t working or submitting something that hasn’t been fully validated.

That makes it a useful proving ground for agentic offensive systems. It isn’t enough to recognize patterns or generate possible attack paths. The system has to manage state, hold onto partial progress, and move between challenges without losing momentum. Getting stuck on one problem has a cost. So does moving too quickly without proof.

We intentionally constrained the run in ways that matter for real-world buyers.

The model choice set the tone early. o2 ran on an open-source GLM-class model, with no escalation to a higher-tier system when things became difficult. That decision forced the system to rely on how it operated rather than how much raw reasoning power it could access.

The scope followed the same pattern. It stayed confined to web, API, and AI/LLM challenges, without drifting into other categories to accumulate points. There was no safety net in breadth. Progress had to come from consistent performance within that slice.

The way submissions were handled tightened things further. Hints were off the table, and there was no room for speculative attempts. Every flag had to come from a path that held up under verification. Guessing wasn’t just discouraged; it was eliminated as a strategy.

Time added its own pressure. o2 did not allow itself to tunnel indefinitely on a single problem. The campaign used an explicit timeboxing doctrine:

Taken together, these constraints both increased difficulty and shaped how the system had to behave. There was no fallback to brute force, no reliance on model strength to compensate for weak execution, and no way to recover lost time through scattered effort.

Everything depended on staying controlled, deliberate, and consistent from start to finish.

o2 doesn’t approach a challenge as a single prompt or a one-shot attempt. It won by being the most disciplined inside a strategically chosen slice of the board. It focused on categories where modern AI systems can compound advantage through browser control, HTTP reasoning, exploit iteration, authentication state handling, memory reuse, and cross-challenge pattern transfer.

In short, it builds on what’s already been discovered and adjusts as the situation changes.

Each step feeds the next. When a login flow is understood, that context carries forward. When a token is exposed or a request pattern reveals something useful, it isn’t treated as an isolated finding. It becomes part of a larger path that can be extended, tested, or abandoned if it stops producing results.

Instead of restarting with every new attempt, the system moves forward with memory of what has already worked, what has failed, and what still looks promising. Partial progress is always reused.
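As a rough illustration of that kind of memory, the sketch below tracks attempt outcomes per challenge and uses them to order what to try next. It is a minimal sketch under stated assumptions, not o2's implementation: `AttemptMemory`, `Outcome`, the ranking rule, and the challenge and technique names are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Outcome(Enum):
    WORKED = "worked"
    FAILED = "failed"
    PROMISING = "promising"


@dataclass
class AttemptMemory:
    """Hypothetical store: challenge name -> {technique name -> outcome}."""
    records: Dict[str, Dict[str, Outcome]] = field(default_factory=dict)

    def record(self, challenge: str, technique: str, outcome: Outcome) -> None:
        self.records.setdefault(challenge, {})[technique] = outcome

    def next_candidates(self, challenge: str, techniques: List[str]) -> List[str]:
        """Order techniques for a challenge using what is already known.

        Assumed ranking (illustrative only):
          0 = marked promising on this challenge
          1 = worked on a different challenge (cross-challenge transfer)
          2 = everything else
        Techniques that already failed on this challenge are skipped.
        """
        seen = self.records.get(challenge, {})
        worked_elsewhere = {
            technique
            for other, attempts in self.records.items()
            if other != challenge
            for technique, outcome in attempts.items()
            if outcome is Outcome.WORKED
        }

        def rank(technique: str) -> int:
            if seen.get(technique) is Outcome.PROMISING:
                return 0
            if technique in worked_elsewhere:
                return 1
            return 2

        remaining = [t for t in techniques if seen.get(t) is not Outcome.FAILED]
        return sorted(remaining, key=rank)  # stable sort keeps input order within a rank


memory = AttemptMemory()
memory.record("login-a", "jwt-none-alg", Outcome.WORKED)
memory.record("login-b", "sqli", Outcome.FAILED)
memory.record("login-b", "idor", Outcome.PROMISING)
order = memory.next_candidates("login-b", ["sqli", "jwt-none-alg", "idor", "ssrf"])
```

Here the failed technique is dropped, the promising one moves to the front, and a technique that worked on another challenge is tried before anything untested.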

Interaction also stays close to how modern applications behave. The system operates through browser flows when needed, handles authenticated states, and works through APIs with an understanding of how requests, tokens, and permissions interact.

When a path breaks, it doesn’t stall. The system changes focus, picks up another thread, and continues moving. Multiple lines of effort stay active, which prevents a single dead end from slowing everything down. Most importantly, nothing is submitted based on possibility alone. A path has to hold up under execution before it counts. That keeps the system grounded in outcomes rather than assumptions.
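One way to picture this behavior is a round-robin loop that gives each line of effort a small budget per turn and only records a flag after it passes a verification check. This is a hedged sketch, not o2's scheduler: `run_threads`, the `verify` callback, and the per-turn step budget (used here as a deterministic stand-in for a wall-clock timebox) are assumptions made for illustration.

```python
from collections import deque
from typing import Callable, Dict, Optional

# A "thread" here is any callable that performs one step of work and returns
# a candidate flag, or None if the step produced nothing (hypothetical shape).
Step = Callable[[], Optional[str]]


def run_threads(
    threads: Dict[str, Step],
    verify: Callable[[str, str], bool],
    steps_per_turn: int = 3,
    max_turns: int = 20,
) -> Dict[str, str]:
    """Rotate between lines of effort; keep only candidates that verify."""
    queue = deque(threads.items())
    submitted: Dict[str, str] = {}
    turns = 0
    while queue and turns < max_turns:
        name, step = queue.popleft()
        turns += 1
        solved = False
        for _ in range(steps_per_turn):
            candidate = step()
            # Gate: a candidate counts only if it holds up under verification.
            if candidate is not None and verify(name, candidate):
                submitted[name] = candidate
                solved = True
                break
        if not solved:
            # Budget spent without proof: move on and revisit this thread later.
            queue.append((name, step))
    return submitted
```

A thread that produces nothing within its budget goes to the back of the queue rather than blocking the others, and an unverified candidate is never recorded.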

Over time, this way of operating compounds. Each step builds on the last, and progress becomes a result of accumulated context instead of isolated attempts.

Under these conditions, o2 reached #1 on the leaderboard.

That outcome on its own doesn’t say much. Leaderboards can be influenced by scope, strategy, or how broadly a system operates across categories. What makes this run different is how the result was achieved.

The system stayed within a narrow set of challenges while others operated across the full platform. It didn’t rely on hints to accelerate progress or recover from difficult paths. It didn’t fall back on speculative submissions to move forward.

Progress came from steady execution across challenges that required state, iteration, and validation. Some paths worked quickly. Others didn’t. In both cases, the system moved forward without losing continuity or overcommitting to a single direction.

There wasn’t a moment where the result hinged on a shortcut or a spike in performance. It built gradually, through consistent decisions that held up across the entire run. By the time it reached the top of the leaderboard, the pattern was already clear.

In practice, offensive security work doesn’t happen under ideal conditions. There’s limited time, incomplete information, and constant pressure to move forward without breaking context. Systems that rely on stronger models alone tend to perform well in isolated tasks, but struggle when execution has to hold across multiple steps.

This run shows a different pattern. Performance came from staying consistent under constraint: managing state across interactions, carrying context forward, and turning partial progress into usable paths. The system didn’t need to expand its scope or rely on more advanced models to compensate. It stayed within a defined surface and continued producing results.

That has direct implications for how these systems are evaluated. Sustained progress, controlled execution, and verified outcomes become the signal. Systems that can hold those qualities over time produce results that stand up under real conditions.

o2 didn’t reach #1 by expanding its reach or increasing its computational advantage. It stayed within a defined scope, operated under strict constraints, and continued moving forward without losing context. Progress came from decisions that held up across the entire run, not from isolated moments of success.

Nothing about the setup made the problem easier. What it did instead was remove the usual dependencies on broader coverage, higher-tier models, and guess-based progress, forcing the system to rely on execution alone. That pattern holds beyond a competitive setting.

It reflects how effective offensive systems operate when conditions are less controlled, targets are less predictable, and outcomes depend on consistency over time.